
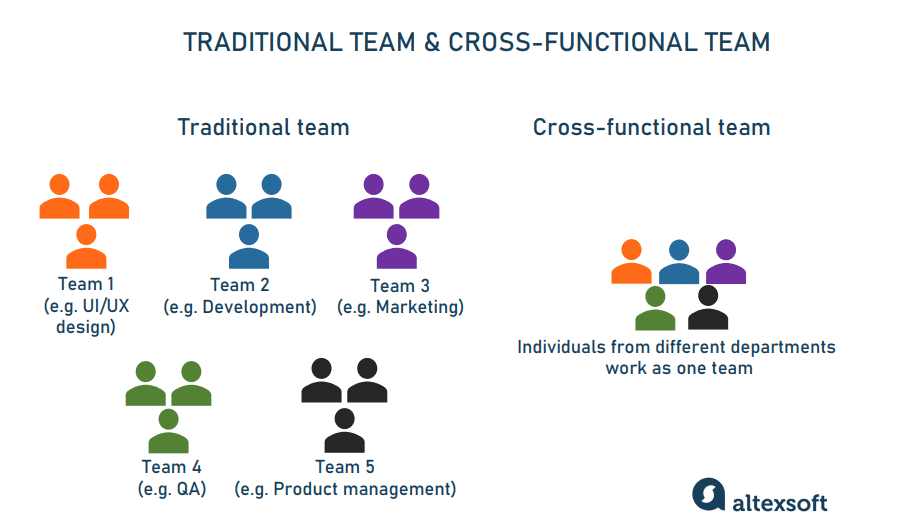

Operational performance increasingly depends on how well artificial intelligence is integrated across departments. AI influences customer engagement, internal reporting, compliance monitoring, forecasting accuracy, workflow automation, and executive decision-making. Because AI affects multiple functions simultaneously, no single department can manage it alone.

As CEO, I saw strong momentum across departments adopting AI independently. Marketing was improving content workflows, operations was streamlining reporting, and finance was enhancing forecasting accuracy. The progress was meaningful, yet I recognized that we needed cross-functional coordination to align governance, share insights, and scale AI responsibly across the entire organization to unlock wider impact. That realization led us to formalize a structured AI task force.

Organizations that rely solely on IT or data science teams to drive AI initiatives often experience bottlenecks, misalignment, and inconsistent adoption. Sustainable AI transformation requires coordinated ownership across leadership, operations, compliance, HR, finance, and frontline teams.

A cross-functional AI task force provides this coordination structure. When designed correctly, it aligns governance, execution, and business outcomes. When designed poorly, it becomes a discussion forum without authority or measurable impact.

At AlignAI.dev, we help companies design AI governance and execution structures that operate with clarity, accountability, and measurable performance outcomes. This blog outlines how to build a cross-functional AI task force that drives real results rather than theoretical planning.

In this blog, we will:

Explain why AI requires cross-functional governance

Present current research on AI adoption and coordination gaps

Define the roles required inside an effective AI task force

Outline a structured implementation model

Provide governance, accountability, and performance measurement guidance

Present a practical Align → Automate → Achieve framework for execution

AI adoption is increasing rapidly, but organizational coordination is lagging behind adoption, creating gaps in value realization, execution, and risk control.

Despite widespread AI usage, many companies aren’t seeing measurable returns. A PwC report presented at the World Economic Forum’s 2026 meeting in Davos found that 56% of companies report no measurable benefit from their AI investments. Leaders attribute these failures to a lack of foundational alignment, strategy, governance, and cross-team coordination, not just to technology adoption.

This shows that adopting AI is not enough; it must be supported by organizational structures that ensure consistent execution and strategic alignment.

Broad adoption masks deep fragmentation. While 88% of organizations now use AI in at least one function, most are still in early or siloed stages, and only a small fraction (approximately 6%) scale beyond pilots with real financial impact.

In other words, AI use is pervasive, but coordination across departments is limited, and isolated adoption does not translate into organization-wide performance.

Multiple industry analyses show that standalone AI projects fail at high rates when ownership, strategic alignment, and cross-team support are absent:

One industry survey shows 70–80% of AI projects fail to meet their objectives or deployment targets, often due to a lack of collaboration between technical and business teams.

Other research predicts that between 60% and 90% of AI projects are at risk of failure by 2026 if governance and integration challenges aren’t addressed.

These failure rates aren’t due to weaknesses in AI technology; they reflect organizational barriers, chief among them fragmented ownership and poor collaboration.

Industry insights consistently link cross-functional collaboration with better AI outcomes:

Research indicates that teams with structured cross-functional collaboration report a 55% reduction in security vulnerabilities and a 42% improvement in compliance audit outcomes for AI systems, compared to siloed approaches.

Collaborative design and testing also correlate with higher adoption rates, improved user satisfaction, and reduced feature abandonment, demonstrating that coordination improves both technical quality and organizational uptake.

These findings show that cross-functional AI teams don’t just reduce risk; they materially improve performance outcomes.

Reports on AI readiness highlight that organizational factors, such as unclear ownership and lack of integrated processes, are bigger barriers than technical limitations:

Research shows that although AI use is common (over 50% adoption in many companies), most organizations still struggle to move beyond early experimentation because teams lack governance, coordination, and operational clarity.

This means technology alone does not drive results. Coordination through a cross-functional structure is necessary to turn adoption into business impact.

AI systems touch legal compliance, privacy, security, workflows, customer outcomes, and financial reporting. When governance is limited to technical teams, critical risks often go unaddressed:

A major IT governance study found that only 7% of organizations have fully embedded AI governance in their development processes, and just 20% have formal cross-department governance groups; most initiatives remain siloed with minimal legal, HR, or ethics involvement.

Without cross-functional oversight, companies expose themselves to regulatory, reputational, and operational risk even as AI use expands.

AI adoption is widespread, yet most organizations:

Struggle to unlock measurable business impact

Fail to coordinate deployment across functions

Lack formal governance and risk controls

See high project failure rates without cross-team design

Miss opportunities to accelerate value delivery

A cross-functional AI task force solves these issues by aligning governance, operations, change management, compliance, and business outcomes, ensuring AI initiatives succeed not just technically, but organizationally.

Before defining what works, it is important to identify what causes failure:

No executive sponsorship

No defined decision rights

Undefined scope

No measurable KPIs

Overemphasis on tools instead of workflows

Governance addressed after deployment

No integration with HR or training

An effective AI task force operates as a decision-making and execution body, not a discussion committee.

A high-performing AI task force includes defined representation from the following roles:

Provides authority, budget alignment, and strategic direction. AI initiatives without executive sponsorship stall during scaling.

Ensures AI initiatives improve throughput, reduce friction, and align with workflow realities.

Oversees integration, system architecture, data pipelines, and cybersecurity alignment.

Ensures AI deployments meet regulatory obligations, privacy standards, and contractual safeguards.

Regulatory frameworks such as the European Union’s AI Act require risk categorization, documentation, and human oversight for certain systems, reinforcing the importance of compliance representation.

Drives AI literacy, workforce enablement, and change management.

Validates ROI models, cost controls, and financial forecasting impacts.

Represent real operational workflows and ensure AI initiatives solve practical problems.

Each member must have:

Defined decision authority

Clear accountability

Role-specific KPIs tied to AI impact

Creating a cross-functional AI task force requires more than assembling representatives from different departments. Without structure, authority, and measurable outcomes, task forces default to discussion groups that generate recommendations without execution.

Organizations that operationalize AI successfully treat it as an enterprise system governed by accountability, workflow integration, and performance metrics.

At AlignAI.dev, we implement a structured framework that transforms AI governance from fragmented oversight into coordinated execution:

Align → Automate → Achieve

This model ensures that the AI task force operates with clarity, decision authority, measurable impact, and cross-functional trust.

Before launching pilots or selecting tools, the task force must align on business priorities, governance standards, workflow scope, and ownership.

This phase answers a foundational question:

What enterprise outcomes will the AI task force be responsible for improving, and how will success be measured?

Define the AI mandate at the enterprise level

Establish clear decision rights and escalation paths

Identify cross-functional workflows with high impact

Formalize governance and compliance guardrails

Secure executive sponsorship and budget alignment

The task force clarifies the performance metrics AI initiatives must influence.

Examples include:

Reducing operational cycle times

Increasing reporting accuracy

Improving cross-department visibility

Accelerating customer response speed

Lowering manual coordination overhead

Increasing forecasting precision

Each outcome must tie to quantifiable KPIs. AI activity without measurable objectives creates ambiguity.

These metrics become the performance contract of the task force.

The task force conducts a structured review across departments to identify:

Where handoffs break down

Where data is duplicated across systems

Where manual reconciliations consume time

Where reporting delays occur

Where approvals stall execution

This audit reveals enterprise friction points that no single department can resolve independently.

High-priority workflows are selected based on:

Frequency

Revenue impact

Compliance risk

Customer experience influence

Scalability potential
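As a rough illustration, the selection criteria above can be turned into a simple weighted score. This is a minimal sketch, not a prescribed method: the weights, the 1-to-5 rating scale, and the example workflow names are all assumptions made for illustration, and each task force would set its own.

```python
from dataclasses import dataclass

# Hypothetical weights: the criteria come from the list above, but their
# relative importance is an assumption for this sketch.
WEIGHTS = {
    "frequency": 0.25,
    "revenue_impact": 0.25,
    "compliance_risk": 0.20,
    "customer_experience": 0.15,
    "scalability": 0.15,
}


@dataclass
class Workflow:
    name: str
    ratings: dict[str, int]  # each criterion rated 1 (low) to 5 (high)


def priority_score(workflow: Workflow) -> float:
    """Return the weighted sum of the workflow's criterion ratings."""
    missing = WEIGHTS.keys() - workflow.ratings.keys()
    if missing:
        raise ValueError(f"{workflow.name} is missing ratings: {sorted(missing)}")
    return sum(WEIGHTS[c] * workflow.ratings[c] for c in WEIGHTS)


def rank_workflows(workflows: list[Workflow]) -> list[Workflow]:
    """Sort workflows from highest to lowest priority score."""
    return sorted(workflows, key=priority_score, reverse=True)


# Illustrative candidates; names and ratings are made up.
candidates = [
    Workflow("customer escalation routing", {
        "frequency": 3, "revenue_impact": 5, "compliance_risk": 2,
        "customer_experience": 5, "scalability": 3,
    }),
    Workflow("monthly close reporting", {
        "frequency": 5, "revenue_impact": 3, "compliance_risk": 4,
        "customer_experience": 2, "scalability": 4,
    }),
]

for wf in rank_workflows(candidates):
    print(f"{wf.name}: {priority_score(wf):.2f}")
```

A scoring sheet like this does not replace judgment, but it makes the reasoning behind each priority visible to every function represented on the task force.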

Each represented function articulates:

Current AI usage

Operational constraints

Compliance obligations

Data sensitivities

Risk tolerance thresholds

These sessions prevent siloed AI deployment and establish shared understanding across leadership, operations, IT, finance, HR, and legal.

The result is a unified AI roadmap rather than departmental experimentation.

The task force formally defines:

Approved AI platforms

Data classification standards

Vendor review procedures

Human-in-the-loop thresholds

Audit documentation requirements

Decision-making authority matrix

Every initiative must have:

A business owner

A technical owner

A compliance reviewer

A performance measurement lead

Authority is documented, not implied.
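One way to make "documented, not implied" concrete is to record the four owners for each initiative and block anything with a gap. The sketch below is illustrative only: the field names and the `can_proceed` rule are assumptions, not a description of any specific governance tool.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class InitiativeOwners:
    """The four named owners every initiative needs (field names are hypothetical)."""
    business_owner: Optional[str] = None
    technical_owner: Optional[str] = None
    compliance_reviewer: Optional[str] = None
    performance_lead: Optional[str] = None


def missing_roles(owners: InitiativeOwners) -> list[str]:
    """List the ownership roles that have not been assigned yet."""
    return [f.name for f in fields(owners) if not getattr(owners, f.name)]


def can_proceed(owners: InitiativeOwners) -> bool:
    """An initiative proceeds only when all four roles are filled."""
    return not missing_roles(owners)


# Example: an initiative with only two of the four roles assigned.
draft = InitiativeOwners(business_owner="Head of Finance", technical_owner="Data Platform Lead")
print(can_proceed(draft), missing_roles(draft))
```

The same record can be extended with escalation contacts or decision limits as the authority matrix matures.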

Alignment must reflect how AI impacts each function.

Enterprise AI strategy validation

Performance KPI prioritization

Risk oversight reporting

Investment allocation decisions

System integration architecture

API and data pipeline validation

Cybersecurity compliance

Access control management

Regulatory classification review

Contractual AI clauses

Documentation standards

Audit trail requirements

Throughput optimization workflows

Bottleneck identification

Process automation candidates

AI literacy enablement programs

Workforce policy updates

Change management frameworks

ROI modeling

Budget allocation tracking

AI automation cost-benefit analysis

By the end of the Align phase, the AI task force has:

A documented charter

Defined KPIs

Governance boundaries

Workflow priorities

Decision rights

Executive endorsement

The task force transitions from concept to accountable structure.

With alignment established, the task force moves into structured execution. This phase converts strategy into measurable pilot implementations.

Deploy cross-functional AI pilots

Integrate AI into real workflows

Maintain compliance and audit visibility

Build operational trust

Selected workflows are converted into structured AI-enabled flows.

Examples:

Data collection → AI analysis → executive summary → review → action

System monitoring → anomaly detection → recommendation → human approval → execution

Each pilot must include:

Defined inputs

Defined outputs

Clear ownership

Performance baseline metrics

AI automation begins with precision.

AI systems are configured to:

Generate structured reports

Trigger cross-system updates

Surface risk alerts

Consolidate multi-source data

Recommend actions within predefined parameters

Human override mechanisms remain mandatory in risk-sensitive workflows.

Oversight preserves accountability.
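The recommendation → human approval → execution flow described above can be sketched as a small approval gate. This is a simplified illustration under stated assumptions: the `Recommendation` and `AuditLog` types, the `risk_sensitive` flag, and the reviewer callback are hypothetical names, and a real deployment would connect to actual review and logging systems.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Recommendation:
    action: str
    risk_sensitive: bool  # assumed to be set by governance rules, not by the AI system


@dataclass
class AuditLog:
    entries: List[str] = field(default_factory=list)

    def record(self, message: str) -> None:
        self.entries.append(message)


def execute_with_oversight(
    rec: Recommendation,
    approve: Callable[[Recommendation], bool],
    log: AuditLog,
) -> bool:
    """Execute a recommendation, requiring reviewer approval when it is risk-sensitive.

    Returns True if the action was executed, False if the reviewer blocked it.
    """
    if rec.risk_sensitive:
        if not approve(rec):
            log.record(f"BLOCKED by reviewer: {rec.action}")
            return False
        log.record(f"APPROVED by reviewer: {rec.action}")
    else:
        log.record(f"AUTO-APPROVED (low risk): {rec.action}")
    log.record(f"EXECUTED: {rec.action}")
    return True


def reject_all(rec: Recommendation) -> bool:
    """Stand-in reviewer that rejects everything, used only for the demonstration."""
    return False


log = AuditLog()
low_risk_done = execute_with_oversight(Recommendation("refresh weekly dashboard", False), reject_all, log)
high_risk_done = execute_with_oversight(Recommendation("suspend vendor payment", True), reject_all, log)
print(low_risk_done, high_risk_done)
print(log.entries)
```

Every path through the gate writes to the log, so the audit trail exists whether an action was executed automatically, approved, or blocked.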

AI becomes embedded into:

CRM systems

ERP platforms

HRIS workflows

Financial dashboards

Executive reporting environments

Integration reduces tool fragmentation and improves adoption.

The task force monitors:

User engagement

Workflow reliability

Output consistency

Risk incidents

The task force conducts:

Weekly pilot reviews

Compliance validation checks

Data security confirmations

Performance tracking updates

Governance operates in parallel with implementation.

| Capability | What It Enables | Business Impact |
| --- | --- | --- |
| Cross-functional AI visibility | Unified performance reporting | Faster executive decisions |
| Structured workflow automation | Reduced manual coordination | Increased throughput |
| Real-time anomaly detection | Early risk mitigation | Lower compliance exposure |
| Documented oversight controls | Safe scaling | Regulatory resilience |

As AI automation stabilizes, organizations observe:

Reduced cross-team friction

Fewer approval bottlenecks

Increased reporting accuracy

Higher executive confidence in data

The Achieve phase converts pilot success into institutional capability.

The AI task force transitions from experimentation to sustained enterprise governance.

Quantify measurable business impact

Scale validated workflows

Formalize governance maturity

Embed AI into operating culture

The task force tracks:

Adoption rates across departments

Time saved per workflow

Cycle time reduction

Error rate improvements

Cost efficiency gains

Compliance audit readiness

Results are reported to executive leadership using standardized dashboards.

This data validates the task force’s mandate.
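Several of the metrics above, such as cycle time reduction and error rate improvement, come down to comparing a pilot's current value against its baseline. The sketch below shows that arithmetic; the function name and the example figures are assumptions for illustration and are not results from any real deployment.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percentage reduction from baseline to current (positive means improvement)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100 * (baseline - current) / baseline


# Made-up example figures: (baseline, current) for two pilot metrics.
pilot_metrics = {
    "cycle_time_days": (10, 7),
    "errors_per_1000_records": (80, 50),
}

for metric, (baseline, current) in pilot_metrics.items():
    print(f"{metric}: {percent_reduction(baseline, current):.1f}% reduction")
```

Reporting every KPI against the baseline captured during the pilot keeps dashboards comparable across departments.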

Successful pilots expand to:

Adjacent business units

More complex workflows

Broader data environments (within governance controls)

Additional geographic or regulatory contexts

Scaling is phased and documented.

As organizational trust increases:

AI automation thresholds expand

Oversight becomes more targeted

Documentation standards strengthen

Internal audit integration deepens

Governance evolves alongside capability.

AI becomes:

Part of leadership review cycles

Embedded in onboarding programs

Included in operational KPIs

Recognized as a strategic capability

New AI initiatives route automatically through the task force governance model.

The structure becomes durable.

A cross-functional AI task force succeeds when:

Its mandate is measurable

Governance is defined upfront

Authority is documented

Workflows are prioritized strategically

AI automation is controlled

Performance impact is transparent

The Align → Automate → Achieve model ensures that AI governance evolves into enterprise execution capability.

At AlignAI.dev, we apply this framework to help organizations build AI task forces that operate with structure, authority, and measurable business impact. The result is coordinated AI adoption that strengthens operational performance, compliance confidence, and cross-functional trust.

AI now influences nearly every operational layer inside modern organizations. A cross-functional AI task force provides the structure required to coordinate governance, workflow redesign, adoption, and performance measurement.

Organizations that build AI task forces with:

Executive sponsorship

Defined decision rights

Measurable KPIs

Governance clarity

Structured scaling frameworks

are positioned to operationalize AI responsibly and effectively.

At AlignAI.dev, we help leadership teams design and implement cross-functional AI governance and execution structures using the Align → Automate → Achieve framework. Our approach ensures AI becomes a coordinated enterprise capability that strengthens performance, accountability, and long-term resilience.

If your organization is preparing to formalize its AI governance and execution strategy, book a Complimentary 30-minute AI Strategy Session with AlignAI.dev to begin building a cross-functional AI task force that delivers measurable results.